Throughout the history of search, personalization has delivered a better user experience in web search and markedly higher conversion rates in product search. The same story is now unfolding in the era of large models.

The Magic of Context: Why Feeding AI More Background Creates Surprising “Aha!” Moments

In recent interactions with LLMs, I’ve been fascinated by the power of context. The more contextual information you provide to an AI, the higher the quality of its output, and sometimes it yields unexpectedly brilliant insights. That moment when you feel it truly understands you isn’t magic; it’s a sign that AI’s “surprise factor” scales with contextual depth.

Three Signals Revealing the New Frontier: Who Masters Context, Masters AI’s Superpowers

- OpenAI’s Memory Evolution

ChatGPT’s long-term memory feature is far more than a routine update. It signals a paradigm shift: conversational AI now remembers your preferences, habits, and boundaries, turning each session from a blank slate into a continuous collaboration. You no longer have to repeat the same inputs, so efficiency and user experience both improve.

- Gemini’s Personalization Path

Google Gemini tailors its assistant to each user, an answer to the “one-size-fits-all” dilemma. Engineers can work with AI assistants pre-loaded with proprietary code libraries; financial analysts can get models fluent in their company’s report structures. This is vertical context mastery in action.

- Enterprise Knowledge Repositories: Redefined

When knowledge bases evolve from static archives into AI’s “core curriculum,” their strategic value explodes. An AI’s grasp of contract clauses, department jargon, or project lifecycles hinges entirely on the quality and architecture of underlying documentation. Knowledge repositories are now mission-critical AI infrastructure.

The new frontier: Moving beyond rigid knowledge graphs to build living, breathing enterprise context systems. This dynamic “cognitive bloodstream” will separate AI leaders from laggards in the next three years.

These applications are already emerging across industries, and they will keep reshaping the user experience:

- Healthcare: An AI doctor assistant remembers a patient’s complete medical history, medication reactions, allergies, and lifestyle habits, offering highly personalized diagnostic advice and health management plans. During follow-up visits, patients don’t need to repeat their history, significantly improving efficiency and accuracy. Key Point: Impact on life and health, changing the doctor-patient relationship (AI becomes a “second brain”).

- Education: An adaptive learning platform remembers each student’s progress, weaknesses, interests, and learning style, and dynamically adjusts teaching content and pathways to deliver teaching that is truly tailored to individual needs. Key Point: Potential for educational equity and widespread personalization.

- Creative Industries: An AI assistant for screenwriters/designers remembers the creator’s past work styles, character settings, and world-building details, maintaining consistency in new projects and inspiring new ideas based on historical context. Key Point: AI as a creative partner, not just a tool.

- Manufacturing/Operations: An AI system remembers the complete operational logs, maintenance history, and operating parameters of each piece of equipment, enabling more precise predictive maintenance. When a new engineer encounters a problem, the AI can directly retrieve the full context of similar past failures (not just knowledge entries). Key Point: Scalable application and transmission of experiential knowledge.

- Enterprise Decision-Making: An internal decision-support AI for executives. When a project is discussed, the AI instantly retrieves and correlates all historical meeting minutes, relevant market reports, and competitor dynamics (based on prior conversations and document analysis), even reminding participants: “Regarding risk point X raised by Manager Zhang last time, what’s the current status?” Key Point: Contextual continuity shortens decision chains, reduces information gaps, avoids redundant work, and ultimately strengthens organizational intelligence and strategic execution. This translates directly into a competitive edge.

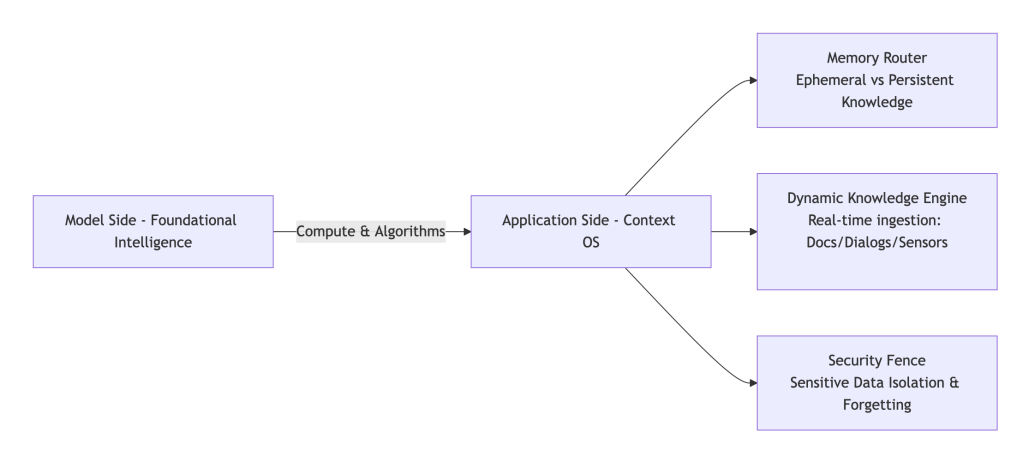

The New Division of Labor: Models Provide IQ, Applications Master Context

- Model Layer: Delivers foundational intelligence (raw reasoning capability).

- Application Layer: Must excel at context orchestration:

  - Memory Management: Intelligent storage/retrieval of contextual data.

  - Interaction Design: Natural, anticipatory user experiences.

  - Agent Evolution: Continuous knowledge refinement.
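To make the memory management idea concrete, here is a minimal sketch of an application-layer memory that stores notes per user and pulls the most relevant ones into a prompt. Everything in it is an assumption for illustration: the `MemoryStore` class, the `build_prompt` helper, and the simple word-overlap scoring are hypothetical and are not how ChatGPT, Gemini, or any specific product implements memory. A real system would more likely use embeddings and a vector index.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryStore:
    """Hypothetical per-user memory: keeps short notes and recalls relevant ones."""

    notes: dict[str, list[str]] = field(default_factory=dict)

    def remember(self, user_id: str, note: str) -> None:
        # Append a note to this user's memory, creating the list on first use.
        self.notes.setdefault(user_id, []).append(note)

    def recall(self, user_id: str, query: str, limit: int = 2) -> list[str]:
        # Score each note by how many lowercase words it shares with the query.
        # This is a deliberately naive stand-in for semantic retrieval.
        query_words = set(query.lower().split())
        scored = []
        for note in self.notes.get(user_id, []):
            overlap = len(query_words & set(note.lower().split()))
            if overlap > 0:
                scored.append((overlap, note))
        # Highest overlap first; the stable sort keeps insertion order for ties.
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [note for _, note in scored[:limit]]


def build_prompt(store: MemoryStore, user_id: str, question: str) -> str:
    # Prepend recalled context so the model does not start from a blank slate.
    context = store.recall(user_id, question)
    lines = ["Known about this user:"]
    lines += [f"- {note}" for note in context]
    lines += ["", f"Question: {question}"]
    return "\n".join(lines)
```

In use, the application would call `remember` whenever the user reveals a durable preference and `build_prompt` before each model call. The design point is that the memory lives in the application layer, so it can be swapped or improved without changing the underlying model.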

Core Capability Shift:

Agent intelligence will migrate from task execution to context iteration capabilities, e.g.:

- A sales bot auto-updating objection-handling playbooks after each rejection.

- An ops agent converting incident resolutions into reusable diagnostic pathways.
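The ops agent example above can be sketched as a small feedback loop in which every resolved incident updates what the agent will suggest next time. This is only an illustration under stated assumptions: the `DiagnosticPlaybook` class, its method names, and the "count how often a fix worked" ranking are hypothetical, and a production agent would need richer signals than a raw count.

```python
from collections import defaultdict


class DiagnosticPlaybook:
    """Hypothetical playbook: maps a symptom to the fixes that resolved it before."""

    def __init__(self) -> None:
        # symptom -> fix -> number of incidents that this fix resolved
        self._wins: dict[str, dict[str, int]] = defaultdict(lambda: defaultdict(int))

    @staticmethod
    def _normalize(symptom: str) -> str:
        # Treat "Disk Full " and "disk full" as the same symptom.
        return symptom.strip().lower()

    def record_resolution(self, symptom: str, fix: str) -> None:
        # Context iteration step: each closed incident refines the playbook.
        self._wins[self._normalize(symptom)][fix] += 1

    def suggest(self, symptom: str) -> list[str]:
        # Return known fixes, most frequently successful first.
        # Ties are broken alphabetically so the order is deterministic.
        fixes = self._wins.get(self._normalize(symptom), {})
        return sorted(fixes, key=lambda fix: (-fixes[fix], fix))
```

The same shape would fit the sales bot: replace symptoms with objection types and fixes with responses that led to a continued conversation. What matters is that the agent's value accumulates in the stored context, not in the single task it just executed.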

The Trifecta of Competitive Advantage

As model capabilities plateau, the winners will dominate three dimensions:

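$$
\text{Leadership} = \underbrace{\text{Context Depth}}_{\text{Memory Density}} \times \underbrace{\text{Interaction Fluency}}_{\text{Seamless UX}} \times \underbrace{\text{Evolution Speed}}_{\text{Knowledge Velocity}}
$$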

Enterprise Implication:

Your application stack isn’t just a UI layer—it’s the cognitive operating system that transforms raw AI potential into sustained competitive advantage. Invest accordingly.
